2.

AI-Powered Developer Productivity with Android Studio & Gemini

Written by Zahidur Rahman Faisal

Android Studio is being transformed into an AI-powered development environment, with Gemini deeply integrated to enhance developer productivity throughout the entire software development lifecycle. The sheer breadth of Gemini’s integration into Android Studio – from basic code completion to advanced crash report analysis and multi-file refactoring via Agent Mode – indicates Google’s ambition to make Android Studio the definitive AI-native development environment. It is not just adding AI features; it is embedding AI into the core fabric of the IDE. This deep integration will likely set a new standard for developer tools, pushing other IDEs and platforms to follow suit. It aims to create an unparalleled developer experience for Android, potentially attracting more developers to the platform and accelerating the pace of innovation.

Gemini in Android Studio

Gemini in Android Studio acts as a coding companion powered by AI, understanding natural language queries related to Android development. It aims to make building high-quality Android apps faster and easier.

These are the key features that Gemini brings in for developer productivity:

- Code Generation & Completion: Generates code snippets and provides intelligent, context-aware code completion.

- Code Transformation & Refactoring: Assists with transforming and refactoring existing code.

- Naming & Documentation: Helps with naming variables, methods, and classes, and with generating code documentation.

- UI Creation: Aids in creating Compose previews and even building app UI based on images (Image to Code).

- Testing & Debugging: Analyzes crash reports from App Quality Insights, provides summaries, recommends next steps, and assists in writing unit tests.

- Commit Messages: Helps draft descriptive commit messages.

- Contextual Assistance: Can offer to open relevant documentation pages and troubleshoot common errors. Developers can highlight code directly in the editor and ask Gemini questions.

Get started with Gemini

Getting help from Gemini within Android Studio is simple and straightforward! You can enable Gemini by following these steps:

- Download the latest version of Android Studio from here.

- Open the starter project and click View > Tool Windows > Gemini.

- A chat box will appear, where you can begin using Gemini’s conversational (aka Chat) interface. You may need to sign in to your Google Account, if you’re not already signed in.

The Gemini agent is powerful! It allows you to perform the following actions to increase your developer productivity and save time:

- Image attachment in Gemini chat.

- Providing file context to Gemini.

- Compose Preview generation.

- Transform an existing UI within Compose Preview.

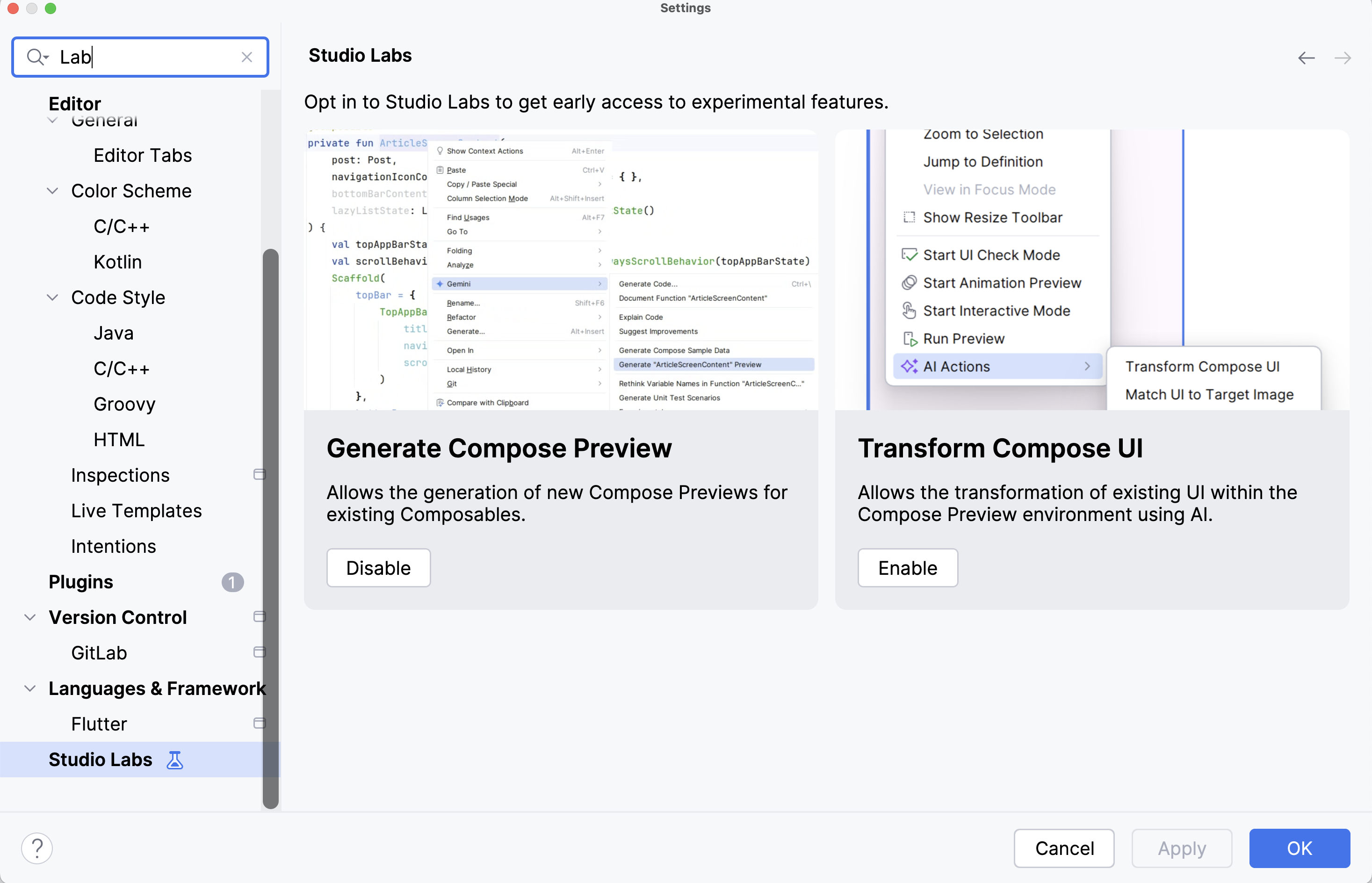

To unlock the full potential of Gemini, you need to enable Studio Labs. Studio Labs lets you discover and experiment with the latest AI features in the stable version of Android Studio.

Go to Android Studio > Settings… > Studio Labs and enable the features that you’d like to start using.

At the time of writing this book, Studio Labs offers these features that you’re going to use later in this chapter:

- Generate Compose Preview

- Transform UI with AI

AI-assisted coding with Gemini Chat

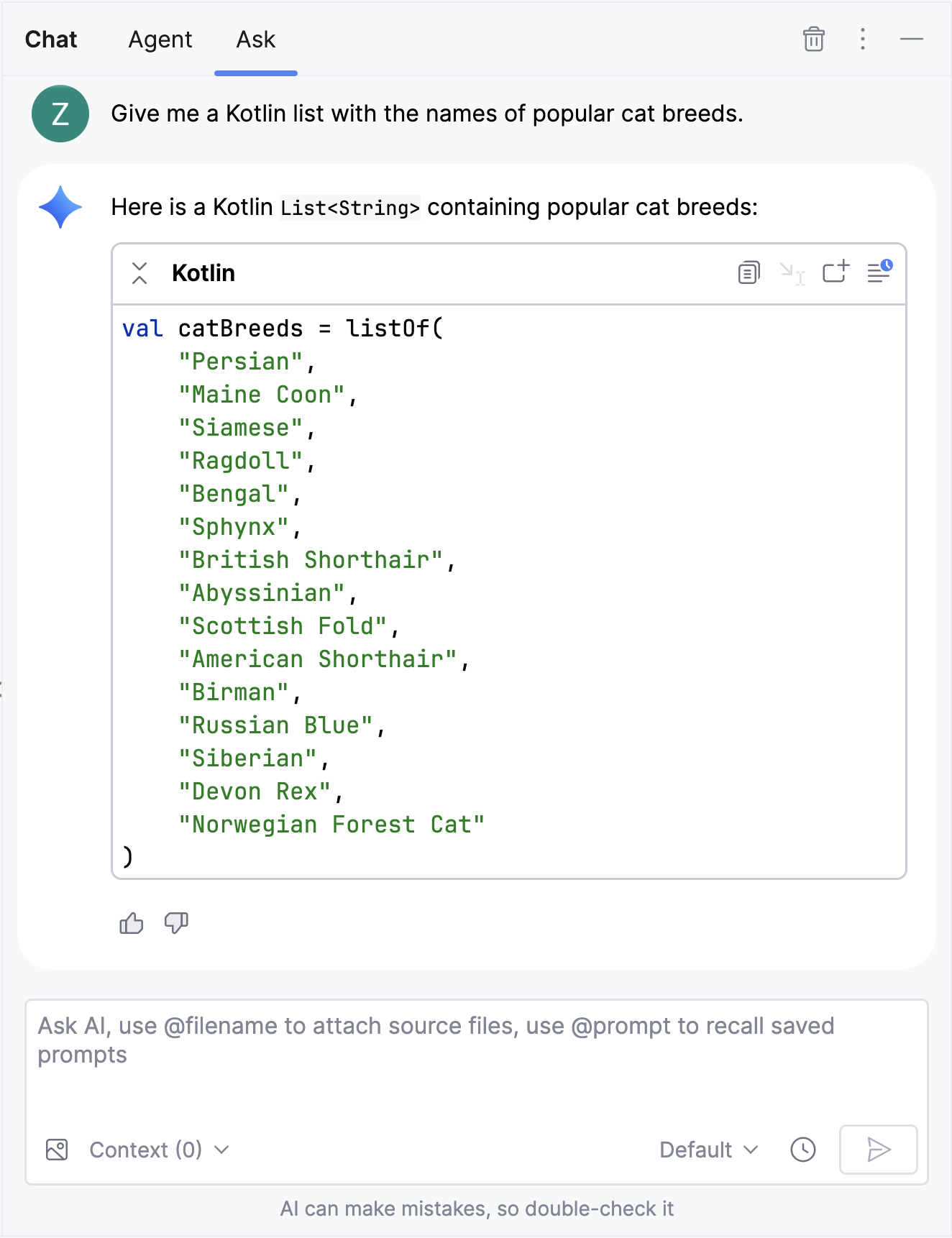

The Gemini Chat window is the main interface for interacting with Gemini within Android Studio. You can start using it by simply asking questions in the Ask tab about specific problems that you need help with. Open the Gemini Chat window by selecting the Gemini icon from the Android Studio side-navigation panel as follows:

Every response from Gemini is followed by these four options for your convenience:

- Copy the code to your clipboard to paste anywhere.

- Insert at Cursor in your current file.

- Insert in New Kotlin File to proceed with further development from there.

- Explore in Playground, which creates a draft file named gemini-playground.kts to play around with the generated code.

Now, copy catBreeds and paste it into the MainActivity.kt file.
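The chat response itself isn't reproduced here. As a rough sketch, assuming you asked Gemini for a Kotlin list of cat breeds, the generated catBreeds might resemble the following. The breed names are the ones used later in this chapter; your actual output may differ.

```kotlin
// A sketch of the kind of list Gemini might return; your output may differ.
val catBreeds = listOf(
    "Siamese",
    "Persian",
    "Maine Coon",
    "Ragdoll",
    "Bengal",
    "Abyssinian",
    "Birman",
    "Oriental Shorthair",
    "Sphynx",
    "Devon Rex",
    "Himalayan",
    "American Shorthair"
)
```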

Some tips for prompting Gemini:

- Describe the structure of the desired answer. For example: “Give me top keywords on Android AI as a Kotlin List”.

- Be specific: if you want to use certain approaches, APIs, or libraries, mention them in your prompt.

- Break down complex requests into a series of simpler questions. This will help generate more accurate output.

Next, you’ll learn how to use Agent Mode for more complex tasks.

Gemini Agent Mode

Agent Mode in Gemini in Android Studio is designed to handle complex, multi-stage development tasks that go beyond what you can achieve through simple conversations. By describing a high-level goal, you can guide the agent to create and execute a plan. This plan involves invoking the necessary tools, making changes across multiple files, and iteratively fixing bugs. This agent-assisted workflow helps you tackle intricate challenges and accelerates your development process.

In Agent mode, your prompt is sent to the Gemini API with a list of available tools, which are like skills. These skills include file searching, file reading, text search within files, and using configured MCP servers. When you give the agent a task, it plans and determines the required tools. Some tools may need permission before use. Once granted, the agent uses the tool to perform the action and sends the result back to the Gemini API. Gemini processes the result and generates another response. This cycle continues until the task is complete.
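The request, tool call, and result cycle described above can be sketched in plain Kotlin. This is only an illustration of the loop, not how Android Studio implements it: ToolCall, ModelReply, Model, and runAgent are hypothetical names, and the model is a stand-in function rather than a real Gemini API call.

```kotlin
// Hypothetical types for illustration; not the Android Studio or Gemini API.
data class ToolCall(val tool: String, val argument: String)

sealed interface ModelReply
data class NeedsTool(val call: ToolCall) : ModelReply
data class Done(val summary: String) : ModelReply

// Stands in for a round trip to the model: it sees the history so far.
fun interface Model {
    fun respond(history: List<String>): ModelReply
}

fun runAgent(
    prompt: String,
    model: Model,
    tools: Map<String, (String) -> String>,
    approve: (ToolCall) -> Boolean,
    maxSteps: Int = 10
): String {
    val history = mutableListOf("user: $prompt")
    for (step in 1..maxSteps) {
        when (val reply = model.respond(history)) {
            is Done -> return reply.summary
            is NeedsTool -> {
                val call = reply.call
                val tool = tools[call.tool]
                val result = when {
                    tool == null -> "error: unknown tool ${call.tool}"
                    !approve(call) -> "error: permission denied"
                    else -> tool(call.argument)
                }
                // Feed the tool result back so the model can plan the next step.
                history.add("tool(${call.tool}): $result")
            }
        }
    }
    return "stopped after $maxSteps steps"
}
```

The approve callback plays the role of the permission prompt: a denied call is reported back to the model as an error instead of being executed, and maxSteps keeps a misbehaving loop from running forever.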

These are the advantages of using Gemini Agent Mode over the Gemini Chat:

- Advanced Task Automation: Agent Mode is an experimental feature within Gemini in Android Studio that introduces Agentic AI capabilities. It allows developers to describe complex, multi-step goals in natural language, such as generating unit tests, performing complex refactors, extracting hardcoded strings, or implementing new screens from screenshots.

- Execution Planning and Guidance: The agent formulates an execution plan that can span multiple files and executes it under the developer’s direction. It utilizes various IDE tools for reading and modifying code, searching the codebase, and managing lengthy tasks.

- Human-in-the-Loop: Developers maintain control by reviewing, refining, and guiding the agent’s output at every step, with the option to accept or reject proposed changes. An “auto-approve” feature is available for faster iteration. The “human-in-the-loop” control is critical for maintaining quality and trust.

- Enhanced Reasoning for Complex Tasks: Leveraging a Gemini API key expands Agent Mode’s context window up to 1 million tokens with Gemini 2.5 Pro, enabling it to reason about more complex or long-running tasks and provide higher-quality responses.

- Extensibility: Agent Mode can interact with external tools via the Model Context Protocol (MCP), an open standard for AI models to use tools and communicate with other AI agents.

Hand off to Agent Mode

To use the Gemini Agent Mode in Android Studio, follow these steps:

- Click Gemini in the sidebar.

- Select the Agent tab.

- Describe the task you want the agent to execute.

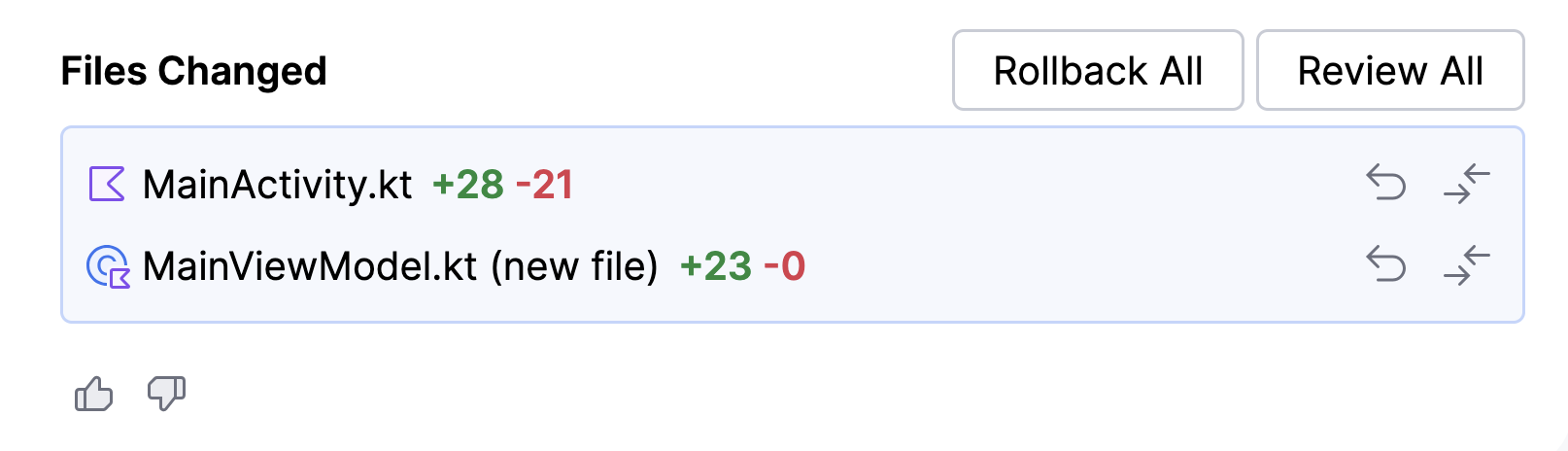

At this point, you probably want to show the cat breeds (catBreeds) as a list in the UI. Before doing so, you’ll want to create a ViewModel as a data source and attach it to the MainActivity. Let’s break this down into small, actionable items:

- Create a new class, MainViewModel, that extends ViewModel; put it in a new file named after the class.

- Move catBreeds to the MainViewModel, and expose it through StateFlow.

- In the MainActivity, consume the value of catBreeds to display it as a List view.

- Notify users when they tap on any item on the list.

You can execute all of these tasks with a single prompt in Gemini’s Agent Mode! Try the following prompt:

Create a ViewModel class named “MainViewModel” in a new File. Move catBreeds to MainViewModel and display it as a List named “CatBreedsListScreen” in MainActivity.kt file. Show a toast message on item clicks.

Gemini Agent will go through the steps to accomplish the task. Once each step is complete, you’ll have the option to review and approve any changes. At the end, you’ll be given a summary of code changes with options to Rollback All or Review All.

After the code changes, the MainViewModel will look something like this:

    class MainViewModel : ViewModel() {

        private val _catBreeds = MutableStateFlow<List<String>>(emptyList())
        val catBreeds: StateFlow<List<String>> = _catBreeds

        init {
            _catBreeds.value = listOf(
                "Siamese",
                "Persian",
                "Maine Coon",
                "Ragdoll",
                "Bengal",
                "Abyssinian",
                "Birman",
                "Oriental Shorthair",
                "Sphynx",
                "Devon Rex",
                "Himalayan",
                "American Shorthair"
            )
        }
    }

The MainActivity will include the changes made by the Gemini agent:

    class MainActivity : ComponentActivity() {

        private val viewModel: MainViewModel by viewModels() // Added ViewModel

        // catBreeds list is now removed from here

        override fun onCreate(savedInstanceState: Bundle?) {
            setTheme(R.style.AppTheme)
            super.onCreate(savedInstanceState)

            setContent {
                KodecoSampleTheme {
                    Surface(modifier = Modifier.fillMaxSize(), color = MaterialTheme.colors.background) {
                        val catBreeds by viewModel.catBreeds.collectAsState()
                        val context = LocalContext.current
                        CatBreedsListScreen(
                            breeds = catBreeds,
                            onItemClick = { breed ->
                                Toast.makeText(context, breed, Toast.LENGTH_SHORT).show()
                            }
                        )
                    }
                }
            }
        }
    }

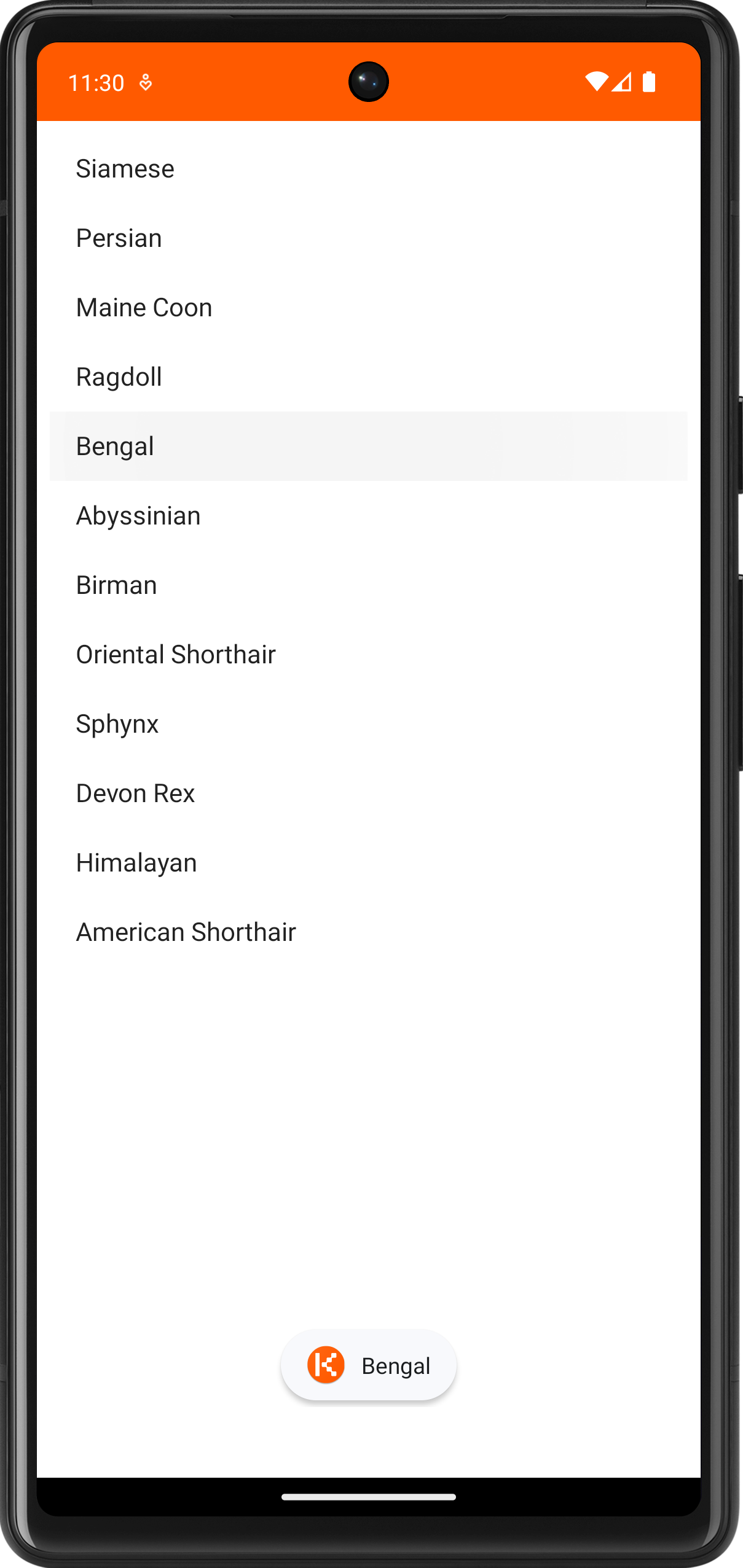

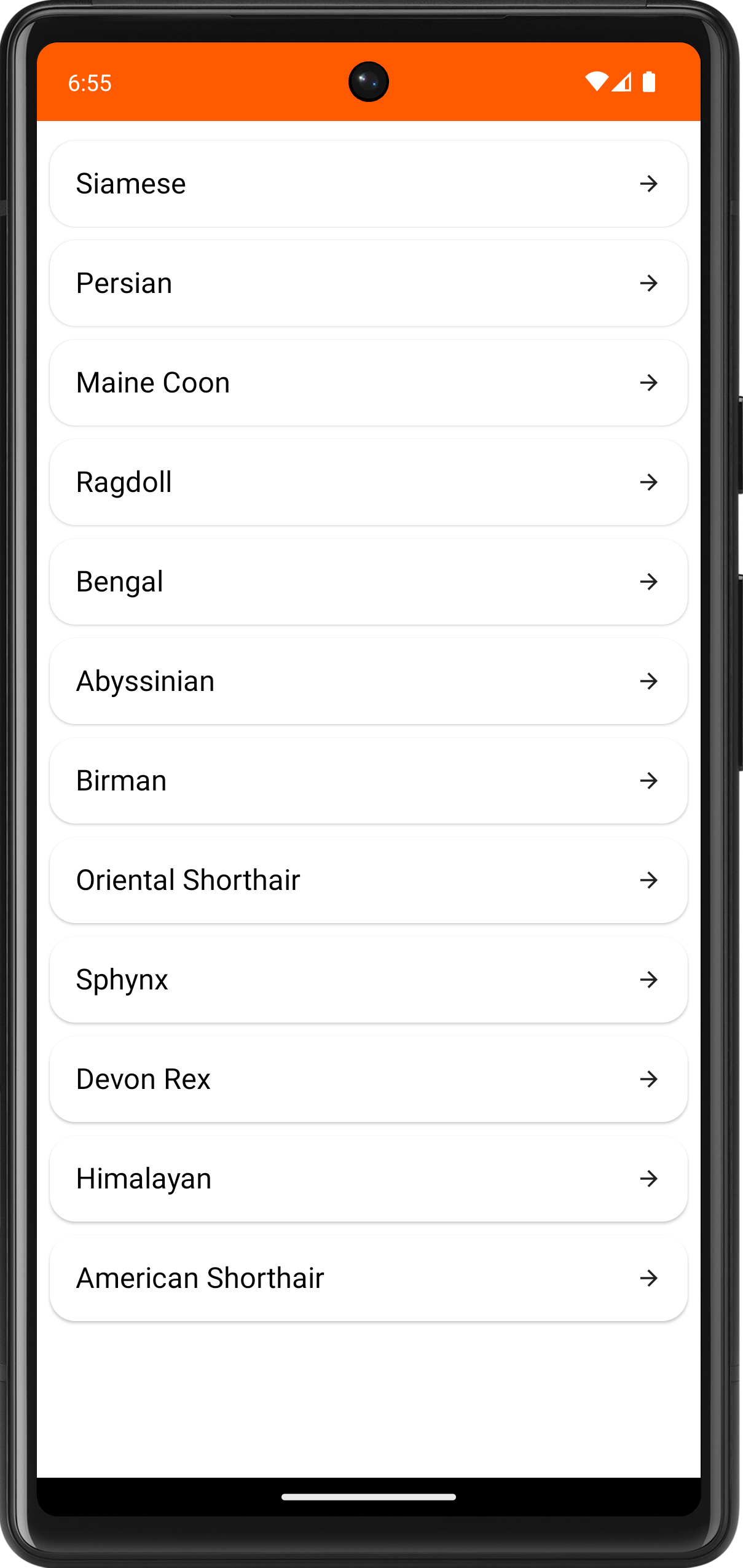

Build and run. The output will look like this:

Note: The Gemini Agent is continuously evolving, so the content and UI design generated by Gemini may differ in your case.

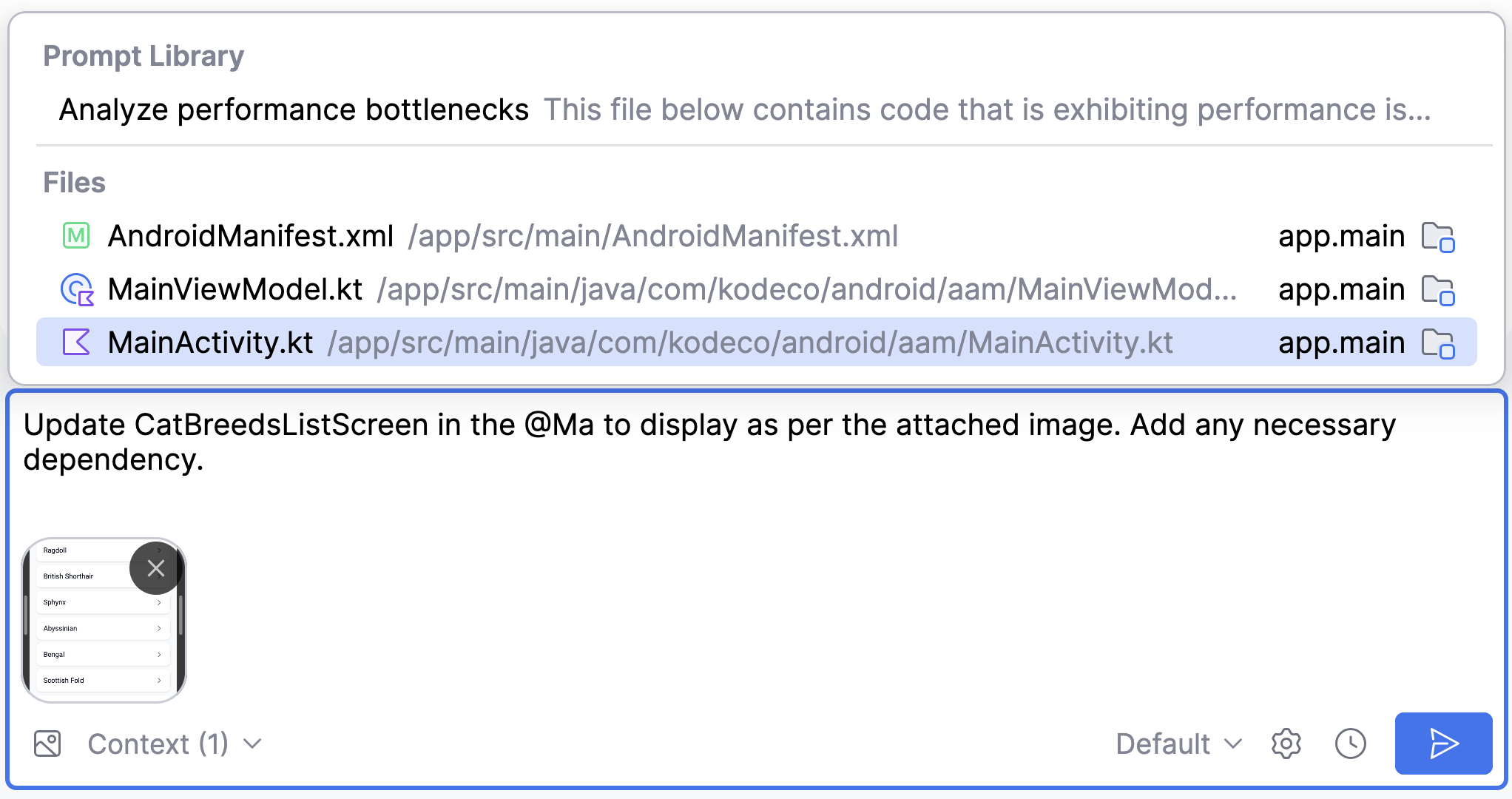

Transform UI with Gemini

Building that list with Gemini was easy, but you can do more! While developing your app, you may have a UI design or mockup ready, and you need to translate it into code.

Android Studio makes this easy for you with Gemini – try these steps:

- Download a sample design image from here. The file is named styled_list.png.

- Go to the Gemini panel (Agent mode) in Android Studio. Attach the styled_list.png image and write a prompt like this:

Use @ to provide file context to Gemini. You can select a specific file from the dropdown – select MainActivity.kt there.

Gemini will evaluate the attached image and update the given function in the specified file to reproduce the same design. It’ll even include any required libraries to achieve the expected styling!

The updated CatBreedsListScreen function may look like this:

    @Composable
    fun CatBreedsListScreen(breeds: List<String>, onItemClick: (String) -> Unit) {
        if (breeds.isEmpty()) {
            Text(text = "No cat breeds available.", modifier = Modifier.padding(16.dp))
            return
        }

        LazyColumn(
            modifier = Modifier.padding(vertical = 8.dp)
        ) {
            items(breeds) { breed ->
                Card(
                    modifier = Modifier
                        .fillMaxWidth()
                        .padding(vertical = 4.dp, horizontal = 8.dp)
                        .clickable { onItemClick(breed) },
                    shape = RoundedCornerShape(16.dp),
                ) {
                    Row(
                        modifier = Modifier
                            .padding(16.dp)
                            .fillMaxWidth(),
                        verticalAlignment = Alignment.CenterVertically,
                        horizontalArrangement = Arrangement.SpaceBetween
                    ) {
                        Text(
                            text = breed,
                            style = TextStyle(
                                fontSize = 18.sp,
                                fontWeight = FontWeight.Normal,
                                color = Color.Black
                            )
                        )
                        Icon(
                            imageVector = Icons.AutoMirrored.Filled.ArrowForward,
                            contentDescription = "Go to details",
                            modifier = Modifier.height(16.dp)
                        )
                    }
                }
            }
        }
    }

Note: Sync the project with Gradle files from the IDE every time you add new dependencies.

The Gemini agent can also help you generate Compose Previews.

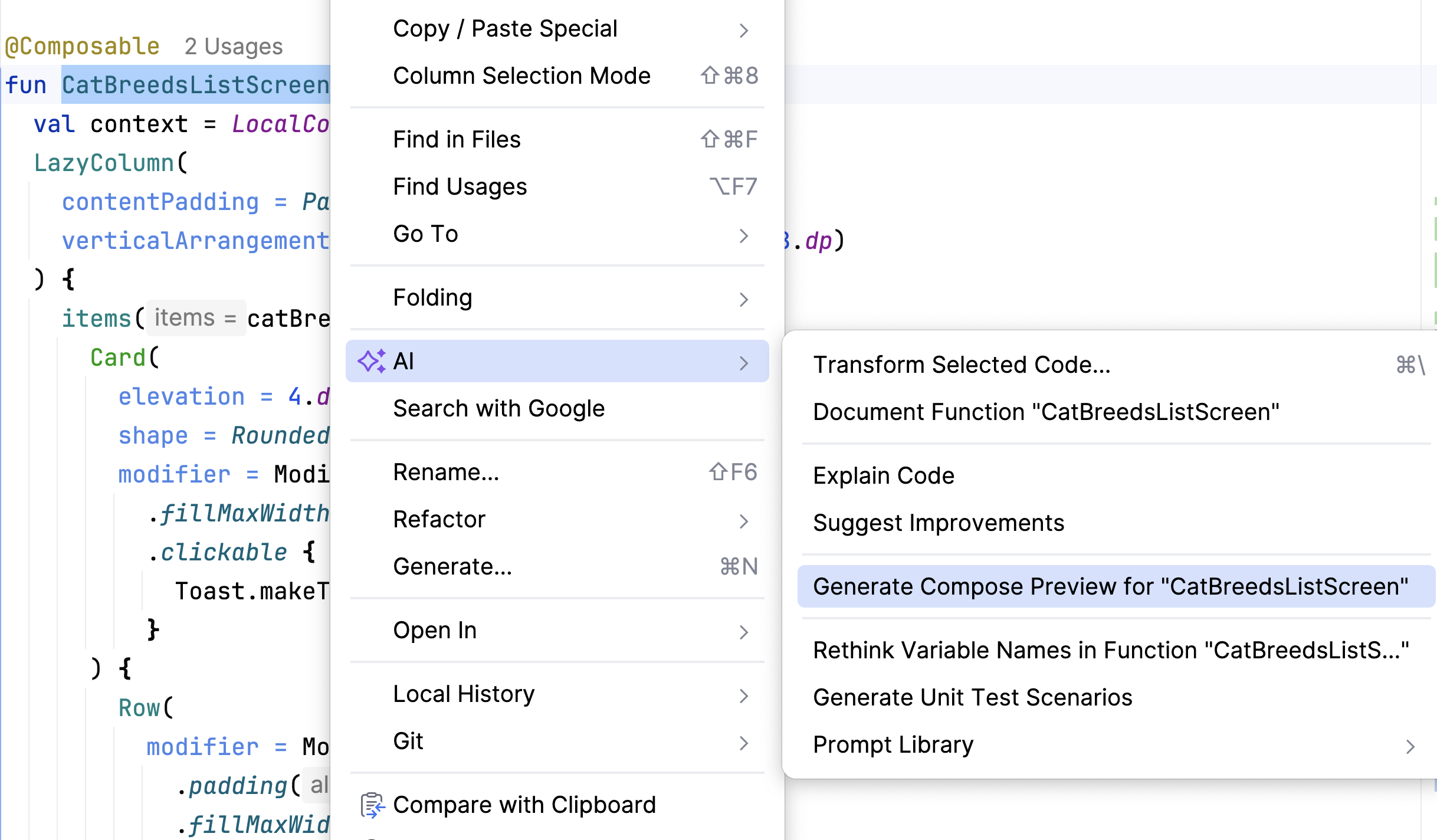

Put the cursor over the CatBreedsListScreen Composable. Right-click to open the context menu and select AI > Generate Compose Preview for CatBreedsListScreen.

The preview function will be generated as follows:

    @Preview(showBackground = true)
    @Composable
    fun CatBreedsListScreenPreview() {
        KodecoSampleTheme {
            CatBreedsListScreen(
                breeds = listOf("Abyssinian", "Aegean", "American Bobtail"),
                onItemClick = {}
            )
        }
    }

Simple yet powerful features! Using them, you performed:

- Image attachment in Gemini chat.

- Providing file context in the chat prompt.

- Transforming an existing UI with the Gemini agent.

- Generating a Compose Preview with Gemini.

Now the UI is transformed and the app is ready to try out. Build and run to see the updated UI:

Testing and Documenting using Gemini

Once your feature is ready, you can command Gemini Agent to help with testing and documentation. These are the tasks you’ll want to perform next:

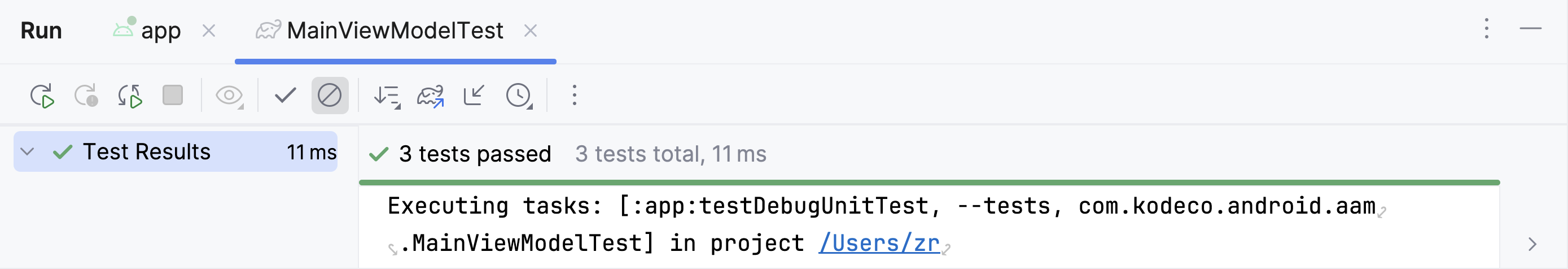

- Create a new JUnit test file named MainViewModelTest to test MainViewModel.

- Add necessary dependencies for testing.

- Add all possible test-cases.

- Document code changes.

Generating Unit Tests

Insert this prompt into the Gemini Agent to add the required test dependencies and generate the MainViewModelTest file:

Create tests for @MainViewModel.kt. Add all necessary test cases in a JUnit test file

You’ll see the Gemini Agent add a JUnit test file, MainViewModelTest in the test/java/com/kodeco/android/aam/ directory and generate test cases. Once you approve the changes, you’ll be able to run tests in the MainViewModelTest file, which looks like this:

    package com.kodeco.android.aam

    import org.junit.Assert.assertEquals
    import org.junit.Before
    import org.junit.Test

    class MainViewModelTest {

        private lateinit var viewModel: MainViewModel

        @Before
        fun setUp() {
            viewModel = MainViewModel()
        }

        @Test
        fun `catBreeds initializes with correct list of breeds`() {
            val expectedBreeds = listOf(
                "Siamese",
                "Persian",
                "Maine Coon",
                "Ragdoll",
                "Bengal",
                "Abyssinian",
                "Birman",
                "Oriental Shorthair",
                "Sphynx",
                "Devon Rex",
                "Himalayan",
                "American Shorthair"
            )
            assertEquals(expectedBreeds, viewModel.catBreeds.value)
        }

        @Test
        fun `catBreeds initial list is not empty`() {
            assert(viewModel.catBreeds.value.isNotEmpty())
        }

        @Test
        fun `catBreeds initial list size is correct`() {
            assertEquals(12, viewModel.catBreeds.value.size)
        }
    }

Run MainViewModelTest and check whether all the tests have passed:

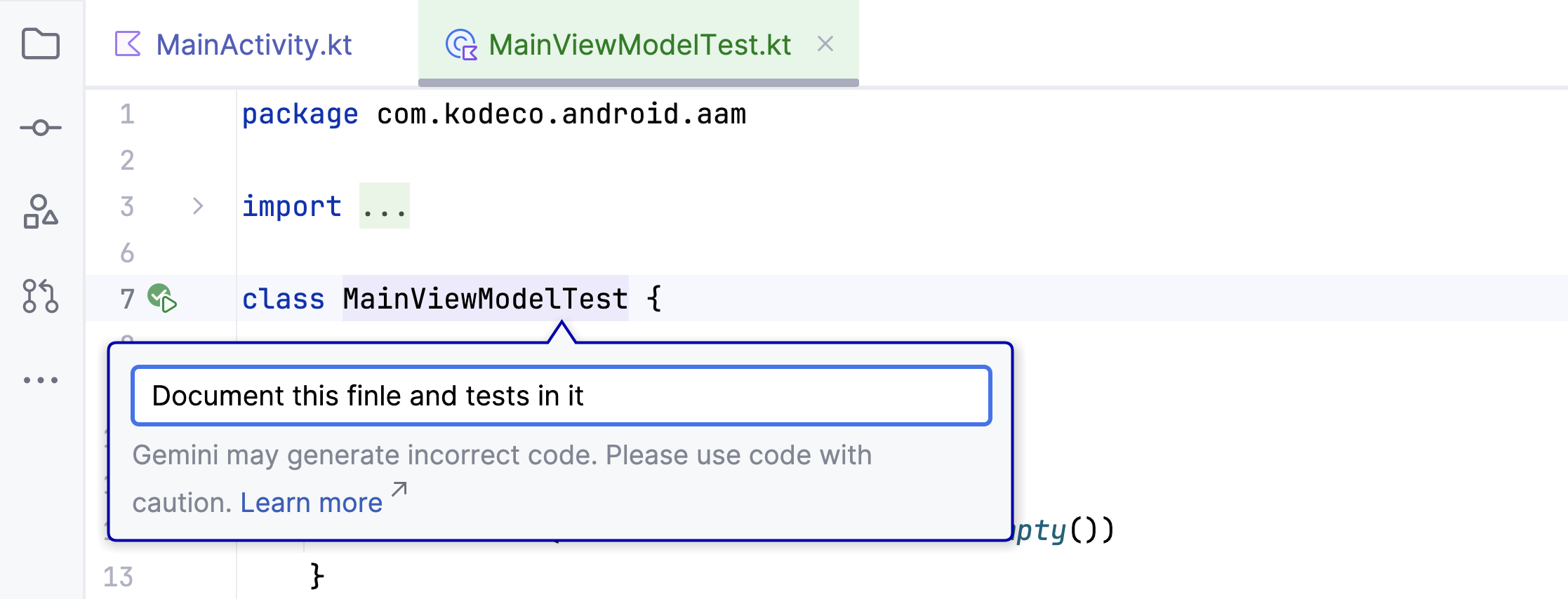

Adding Documentation

Gemini makes adding documentation plain and simple. You tell Gemini where you want documentation added, and it prepares the documentation in the right format.

To try it out:

- Select MainViewModelTest.

- Press Command + \ on Mac or Alt + \ on Windows to bring up the inline Gemini prompt.

- Type Document this file and tests in it in the prompt.

Select Accept and Close when Gemini completes the task. The documented file will look like this:

    package com.kodeco.android.aam

    import org.junit.Assert.assertEquals
    import org.junit.Before
    import org.junit.Test

    /**
     * Unit tests for the [MainViewModel].
     * This class contains tests to verify the functionality of the [MainViewModel],
     * specifically focusing on the initial state of the `catBreeds` StateFlow.
     */
    class MainViewModelTest {

        private lateinit var viewModel: MainViewModel

        /**
         * Sets up the test environment before each test.
         * Initializes a new instance of [MainViewModel].
         */
        @Before
        fun setUp() {
            viewModel = MainViewModel()
        }

        /**
         * Tests if the `catBreeds` StateFlow is initialized with the correct list of cat breeds.
         * It compares the actual list of breeds from the ViewModel with an expected list.
         * This ensures that the initial data for cat breeds is loaded as expected.
         */
        @Test
        fun `catBreeds initializes with correct list of breeds`() {
            val expectedBreeds = listOf(
                "Siamese",
                "Persian",
                "Maine Coon",
                "Ragdoll",
                "Bengal",
                "Abyssinian",
                "Birman",
                "Oriental Shorthair",
                "Sphynx",
                "Devon Rex",
                "Himalayan",
                "American Shorthair"
            )
            assertEquals(expectedBreeds, viewModel.catBreeds.value)
        }

        /**
         * Tests if the initial list of `catBreeds` is not empty.
         * This is a basic sanity check to ensure that the ViewModel initializes
         * `catBreeds` with some data.
         */
        @Test
        fun `catBreeds initial list is not empty`() {
            assert(viewModel.catBreeds.value.isNotEmpty())
        }

        /**
         * Tests if the size of the initial list of `catBreeds` is correct.
         * It verifies that the number of breeds loaded initially matches the expected count.
         * In this case, it checks if there are 12 cat breeds loaded.
         */
        @Test
        fun `catBreeds initial list size is correct`() {
            assertEquals(12, viewModel.catBreeds.value.size)
        }
    }

This helps you focus on problem solving and code quality, rather than spending your time on chores.

Developer Control and Safety

Gemini in Android Studio offers developers a robust set of controls and safety features to ensure privacy, responsible AI usage, and effective integration into their workflows. It includes safety controls and mechanisms for feedback, and by default, does not see code in the editor unless you opt in for higher-quality responses and experimental features.

Developer Control over Data Sharing and Context Awareness

- Explicit Consent: Your code is never shared with Gemini servers without your explicit consent. By default, Gemini only uses your prompts and conversation history.

- Opt-in for Context Awareness: To enable higher-quality responses and features like AI code completion, you can opt in to share context from your codebase. This allows Gemini to understand your project-specific code, file types, and other relevant information.

-

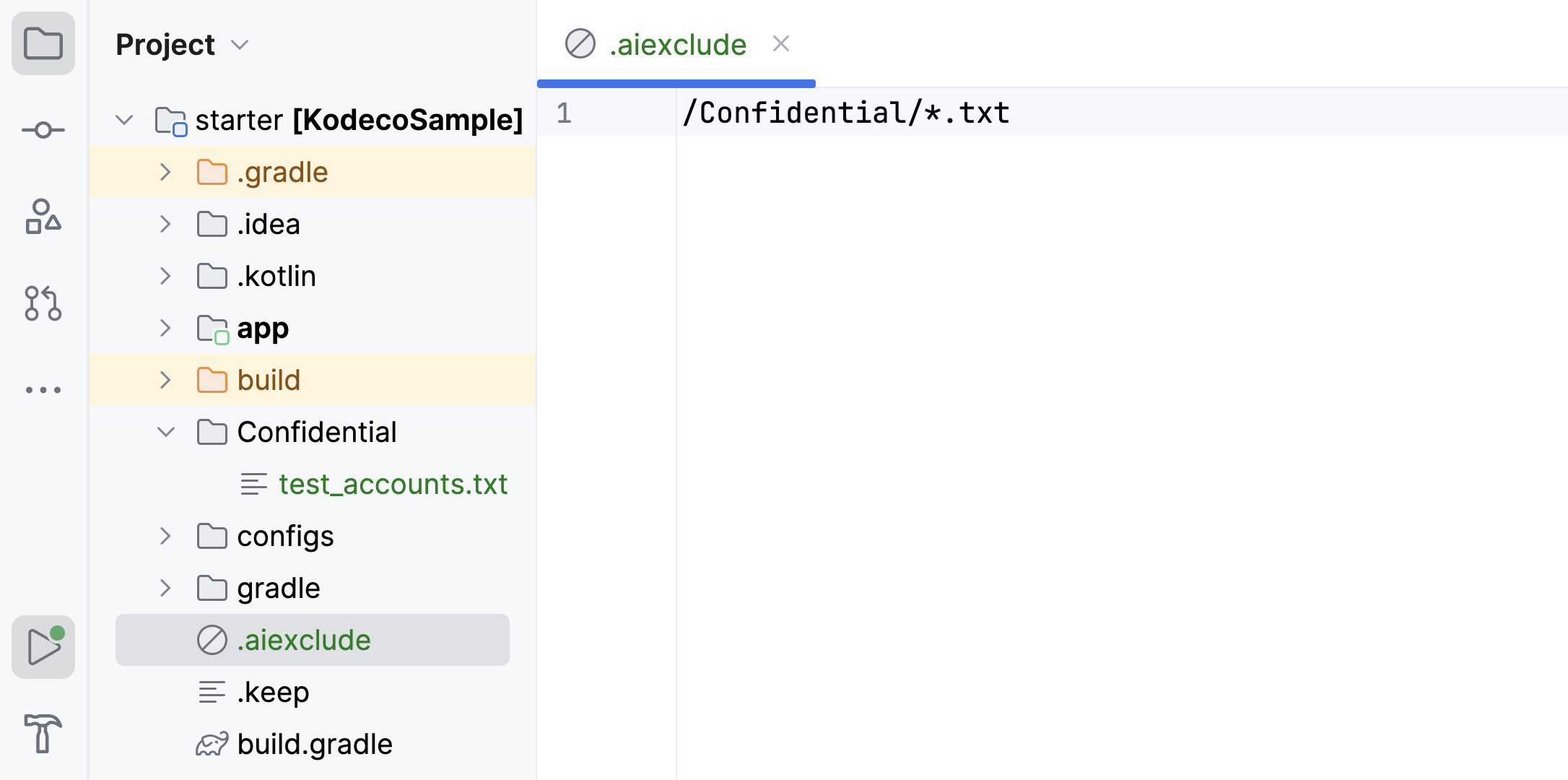

Granular Control with

.aiexcludefiles: Similar to.gitignore, you can create.aiexcludefiles in your project directories to precisely control which parts of your codebase Gemini can access. This is crucial for protecting sensitive code or data.For example, adding an

.aiexcludefile like below will prevent Gemini from accessing any.txtfiles in the Confidential folder (such as,test_accounts.txt).

Note: You can learn more about configuring the

.aiexcludefile in the Responsible AI section of the official Android Documentation.

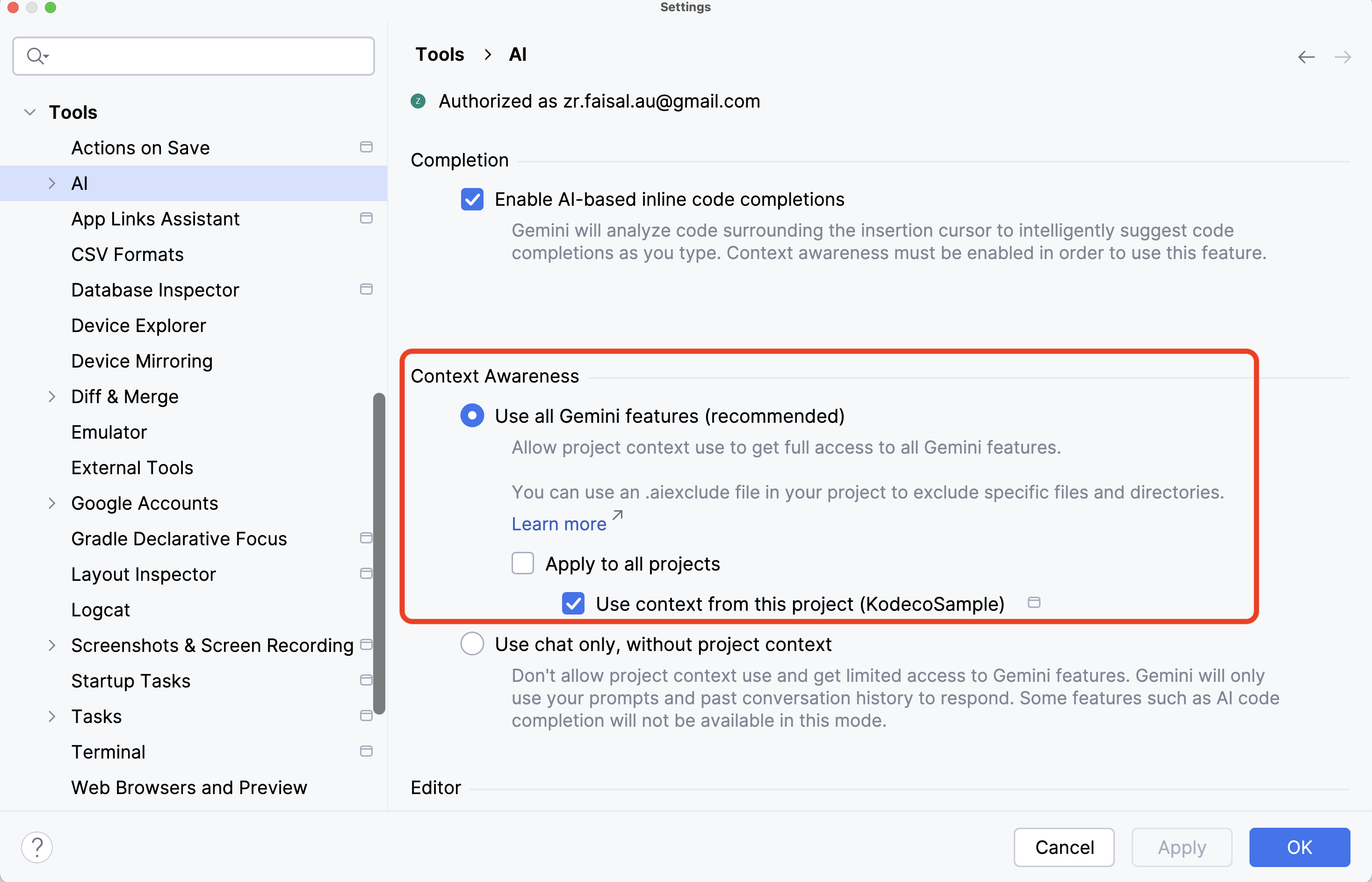

- Global and Project-Specific Settings: Android Studio provides settings under Android Studio > Settings... > Tools > AI on Mac (or File > Settings > Tools > AI on Windows) to manage context awareness globally or on a per-project basis. You can choose to allow all project code, specific projects, or no project code at all.

- Transparency on Data Use: Google clearly outlines how your data is collected and used. While your code isn’t shared without consent, usage statistics, prompts, responses, and feedback signals might be used to improve Gemini. For individual users, code explicitly entered into the chat may be used for training. For businesses using Gemini Code Assist, code is not used for model training.

Safety Settings and Content Filtering

- Adjustable Safety Filters: During the prototyping stage, you can adjust safety settings across five categories to determine if your application requires more or less restrictive configurations:

- Harassment

- Hate speech

- Sexually explicit

- Dangerous

- Civic integrity

- Built-in Protections: The Gemini API, which powers Gemini in Android Studio, has built-in protections against core harms like content that endangers child safety. These are always blocked and cannot be adjusted.

- Probability-Based Blocking: The API categorizes the probability of content being unsafe (HIGH, MEDIUM, LOW, NEGLIGIBLE) and blocks content based on this probability, not the severity.

- Threshold Control: You can set thresholds to block content based on these probability levels:

- BLOCK_ONLY_HIGH

- BLOCK_MEDIUM_AND_ABOVE

- BLOCK_LOW_AND_ABOVE

- BLOCK_NONE

Here’s some sample code for defining adjustable safety filters:

    // Class and enum names can differ between Gemini SDKs and versions;
    // match them to the SDK you use.

    // Example: Block content with a MEDIUM or HIGH probability of being harassment
    val harassmentSafety = SafetySetting(
        HarmCategory.HARASSMENT,
        HarmBlockThreshold.BLOCK_MEDIUM_AND_ABOVE
    )

    // Apply the same threshold to dangerous content
    val dangerousContentSafety = SafetySetting(
        HarmCategory.DANGEROUS_CONTENT,
        HarmBlockThreshold.BLOCK_MEDIUM_AND_ABOVE
    )

    // Initialize the GenerativeModel with the safety settings
    val generativeModel = GenerativeModel(
        modelName = "gemini-2.5-flash",
        apiKey = BuildConfig.GEMINI_API_KEY,
        safetySettings = listOf(
            harassmentSafety,
            dangerousContentSafety
        )
    )

You’ll learn more about configuring and using Gemini models in later chapters.

- Safety Filtering per Request: When you make a request to Gemini, the content is analyzed and assigned a safety rating. You can configure how the system responds to different safety ratings.

- Responsible AI Principles: Gemini is developed with Google’s AI Principles in mind, emphasizing responsible AI development.
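The relationship between probability levels and thresholds described above can be sketched as a small comparison. The enums below are hypothetical stand-ins that reuse the names from the text; they are not the SDK's own types, and the real filtering happens on the server side.

```kotlin
// Illustrative only: these enums mirror the names used in the text, not an SDK.
// Declaration order matters, because enum comparison uses ordinal position.
enum class HarmProbability { NEGLIGIBLE, LOW, MEDIUM, HIGH }

// Each threshold stores the lowest probability it blocks (null blocks nothing).
enum class BlockThreshold(val minBlocked: HarmProbability?) {
    BLOCK_NONE(null),
    BLOCK_ONLY_HIGH(HarmProbability.HIGH),
    BLOCK_MEDIUM_AND_ABOVE(HarmProbability.MEDIUM),
    BLOCK_LOW_AND_ABOVE(HarmProbability.LOW)
}

// Content is blocked when its probability reaches the threshold's minimum.
fun isBlocked(probability: HarmProbability, threshold: BlockThreshold): Boolean {
    val min = threshold.minBlocked ?: return false
    return probability >= min
}
```

Under this sketch, content rated MEDIUM is blocked by BLOCK_MEDIUM_AND_ABOVE and BLOCK_LOW_AND_ABOVE, but passes with BLOCK_ONLY_HIGH or BLOCK_NONE. The built-in protections mentioned earlier are separate and are not affected by these thresholds.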

Developer Oversight and Iteration in AI-Assisted Workflows

- Review and Acceptance of Suggestions: When Gemini proposes code changes (e.g., for refactoring, generating code, or fixing errors), developers are firmly in control. They can review the suggested changes as a code diff and choose to accept or reject them.

- Agent Mode with Developer Approval: Agent Mode allows Gemini to handle complex, multi-stage development tasks. However, the agent waits for the developer to approve or reject each change, ensuring human oversight throughout the process.

- Iterative Feedback: Developers can provide feedback to Gemini and ask it to iterate on its suggestions, refining the output to meet their specific needs.

- Transparency in Agent Actions: When Agent Mode is active, Gemini shows the plan it intends to follow and the IDE tools it needs to complete each step.

Business and Enterprise-Grade Features (Gemini Code Assist)

- Enhanced Privacy and Security: For businesses, Gemini Code Assist (a subscription service) offers additional privacy and security features, including:

- No Code Training: Customer code, inputs, and generated recommendations are explicitly not used to train shared models.

- Data Ownership: Customers retain control and ownership of their data and intellectual property.

- Security Features: Integration with Private Google Access, VPC Service Controls, and Enterprise Access Controls with granular IAM permissions.

- IP Indemnification: Google provides generative AI IP indemnification, safeguarding organizations against third-party claims of copyright infringement related to AI-generated code.

- Customization for Organizational Practices: Enterprise users can connect Gemini to their GitHub, GitLab, or BitBucket repositories (including on-premise) to allow Gemini to understand their internal codebases, best practices, and preferred frameworks, leading to tailored and more relevant suggestions.

Conclusion

Gemini in Android Studio is designed to be a powerful AI assistant that empowers developers while prioritizing their control, code privacy, and the responsible use of AI. It provides a spectrum of configurable options to balance the benefits of AI assistance with the need for security and adherence to organizational policies.

Google is investing in building more Agentic experiences for Gemini in Android Studio, promising more functionality in future releases. The progression from Gemini as a “coding companion” offering suggestions to Agent Mode, which can “create an execution plan” and “execute under your direction”, illustrates a shift in the nature of developer-AI collaboration. It moves from a reactive assistant to a proactive, delegated partner. This implies a future where developers spend less time on manual coding and more time on high-level design, problem definition, and validating AI-generated solutions.